Facebook and the Open Compute Project

Introduction

This article is about Yosemite, the second revision of the micro-server system developed as part of Facebook's Open Compute Project (OCP). I was fortunate to get a tour of this fabulous piece of engineering at Maker Faire 2015.

OCP - The Open Compute Project

Engineering any data-center gear, such as servers, networking switches/routers or data storage devices, follows a fairly standard process. In essence, you have to deliver the following four pieces:

- The Mechanicals: the external housing and mounts, the sockets into which the circuit boards are inserted, and the cooling design, where modeling and simulation are used to determine the optimum airflow pattern

- The Electronics: the power supply, custom chips, printed circuit board and all its peripherals

- The Firmware: for device booting, management and low-level drivers

- The Certifications: the device has to be certified by the appropriate agencies in each country where you plan to sell it

This process, not surprisingly, is expensive and time-consuming. While it is understandable that any equipment designer or maker has to protect its designs and IP to keep its competitive edge, there are parts of any design that can be made public with minimal risk of monetary or competitive loss. In my opinion, especially in the field of networking, compute and storage equipment, the mechanicals would be a great candidate to open-source. This way the community can re-use and contribute to these designs, rather than re-engineering all of this from the ground up. Open-sourcing even a small portion of a design would save time and money, and lower the bar for entry into tech hardware design and manufacturing.

With OCP, Facebook, in partnership with a few other companies, has done precisely this. They have open-sourced the Open Rack System, parts of the Yosemite MicroServer System, the Top-of-Rack Switch and several other pieces of equipment.

Project Yosemite

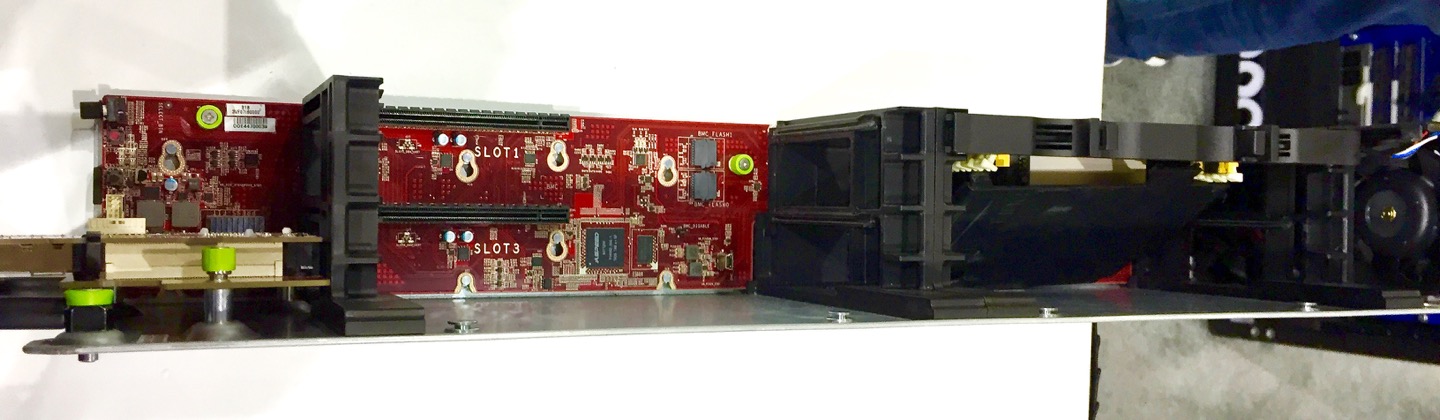

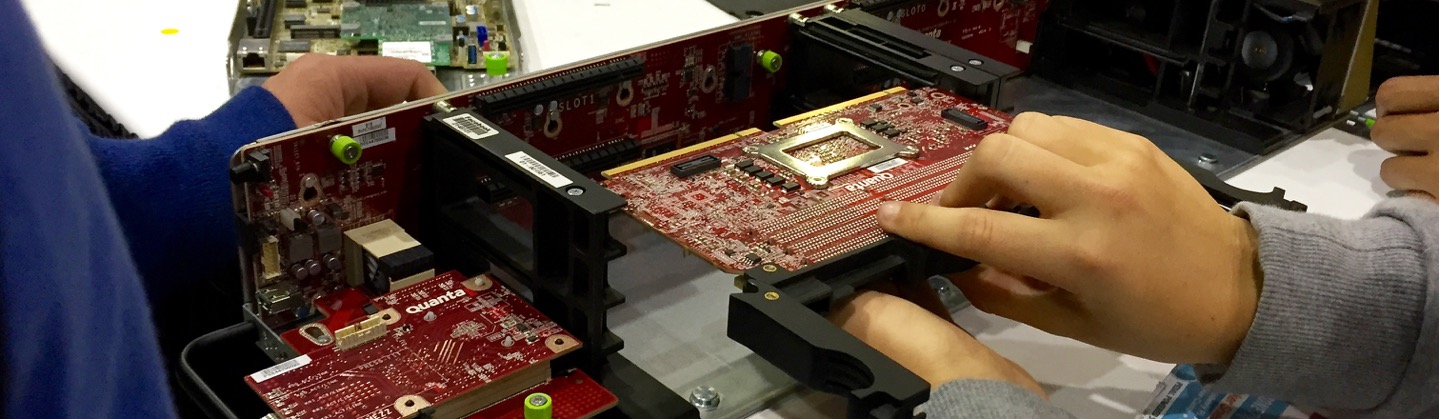

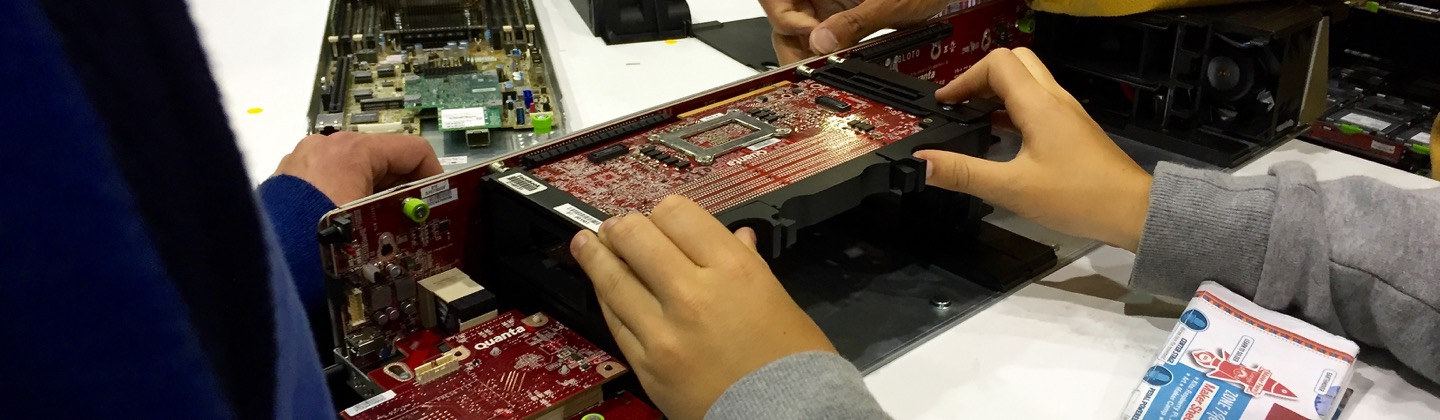

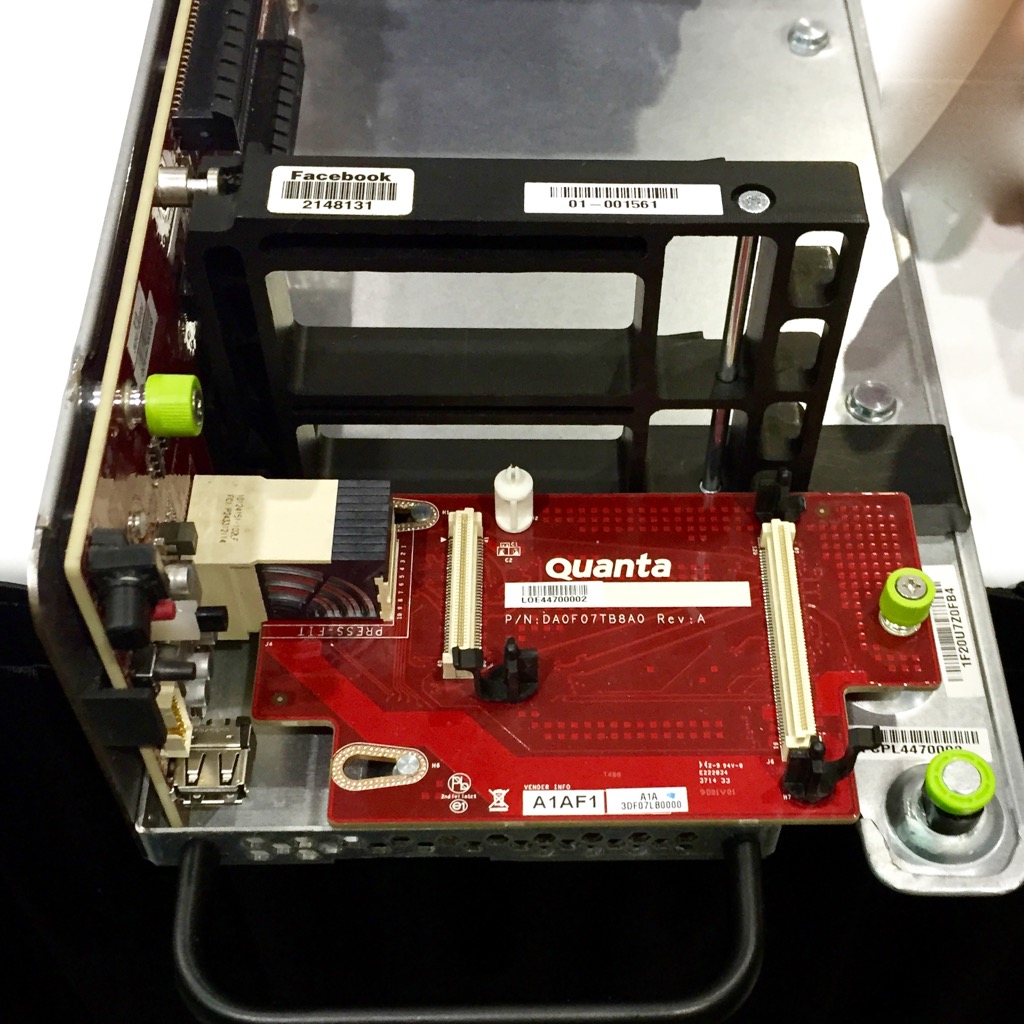

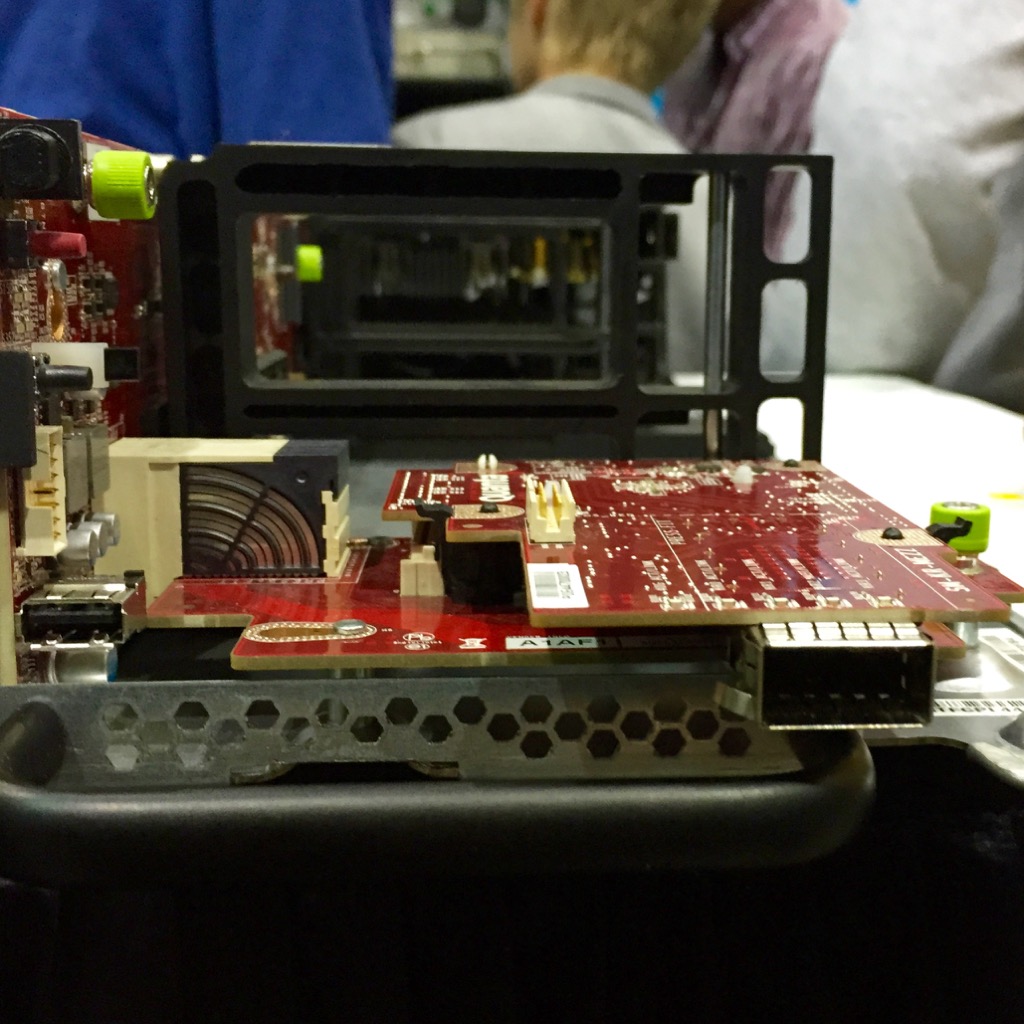

The Yosemite micro-server system is a beautiful piece of engineering. It packs 12 micro-server cards into a 2U footprint. The 2U system is made up of 3 sleds, each 1/3 the width of the rack; Facebook refers to each sled as a Chassis. Each Chassis houses 4 server cards, and each card runs an Intel Broadwell-DE processor.
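To make the layout arithmetic concrete, here is a minimal Python sketch that enumerates the card slots from the figures quoted above (3 sleds, 4 cards per sled, 2U). The names `CardSlot` and `enumerate_slots`, and the 0-based left-to-right numbering, are my own illustrative assumptions and are not taken from the OCP specification.

```python
from dataclasses import dataclass

# Figures from the description above; all names here are illustrative only.
SLEDS_PER_SYSTEM = 3   # each sled (Chassis) is 1/3 of the rack width
CARDS_PER_SLED = 4     # micro-server cards per Chassis
RACK_UNITS = 2         # the whole system occupies 2U


@dataclass(frozen=True)
class CardSlot:
    sled: int  # 0-based sled index (assumed ordering, not from the spec)
    card: int  # 0-based card index within the sled (assumed ordering)


def enumerate_slots():
    """Return one CardSlot for every micro-server position in a 2U system."""
    return [
        CardSlot(sled, card)
        for sled in range(SLEDS_PER_SYSTEM)
        for card in range(CARDS_PER_SLED)
    ]


slots = enumerate_slots()
print(len(slots))               # total micro-server cards in the system
print(len(slots) / RACK_UNITS)  # cards per rack unit
```

With these figures the sketch yields 12 slots, which works out to 6 micro-servers per rack unit.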

Fans at the back make it a front-to-back cooled system.

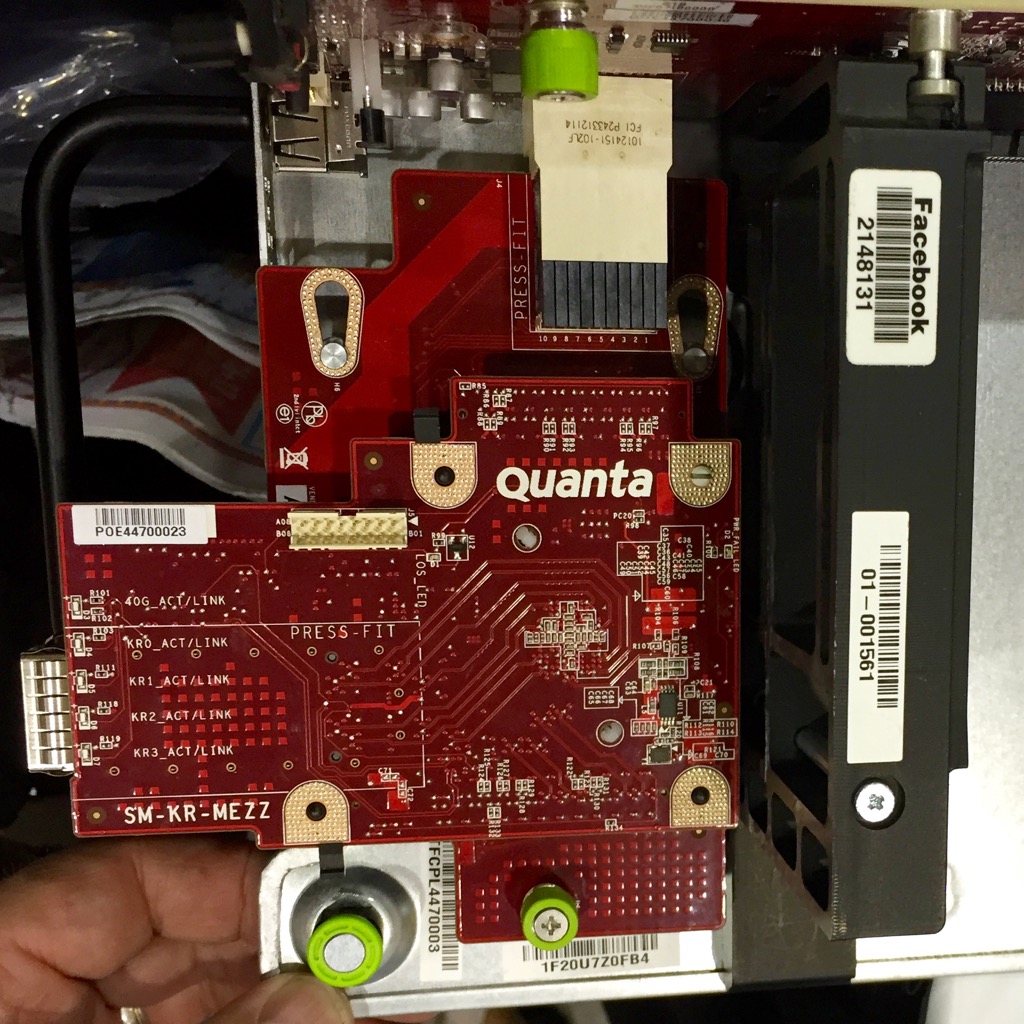

To get traffic in, each Chassis has a 40Gbps front panel port in a 4x10Gbps configuration, and the top-of-rack switch feeds this 40G port. Each 10G lane of the 40Gbps port in turn feeds one micro-server.
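The bandwidth split can be sketched the same way. This is a rough Python illustration using only the numbers above (one 40Gbps port per Chassis, broken out into 4 lanes, 3 Chassis per system). The function names and the assumption that lane N feeds card N are hypothetical; the real lane-to-card wiring is not described here.

```python
# Figures from the description above; names and lane ordering are assumptions.
PORT_GBPS = 40           # one front-panel port per Chassis
LANES_PER_PORT = 4       # the port runs in a 4x10Gbps configuration
CHASSIS_PER_SYSTEM = 3   # three Chassis per 2U system


def lane_gbps():
    """Nominal bandwidth available to each micro-server, in Gbps."""
    return PORT_GBPS / LANES_PER_PORT


def lane_map(chassis):
    """Map each lane of a Chassis port to a card label (lane N -> card N, assumed)."""
    return {lane: f"chassis{chassis}-card{lane}" for lane in range(LANES_PER_PORT)}


def system_uplink_gbps():
    """Nominal aggregate bandwidth from the top-of-rack switch into one 2U system."""
    return PORT_GBPS * CHASSIS_PER_SYSTEM


print(lane_gbps())           # per-server share of the 40G port
print(lane_map(0))           # lane assignments for the first Chassis
print(system_uplink_gbps())  # total across all three Chassis
```

Under these assumptions each micro-server gets a nominal 10Gbps, and a full 2U system presents 120Gbps to the top-of-rack switch across its three ports.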

ODM - Original Design Manufacturer

Now, how does Facebook go about designing and manufacturing this gear? Facebook is essentially a hardware design house. It has a small team of hardware and system engineers who write the specifications for the servers and then work with an Original Design Manufacturer (ODM) to bring the product to life. Quanta is the ODM Facebook worked with to design a lot of this equipment (you can see the Quanta brand on the circuit boards).

While not all parts of this micro-server system are open-sourced (because Quanta has to protect its IP), you can still go to Quanta and ask them to build you a Yosemite, since Facebook has opened it up from their end.

In summary, with OCP, I think the world of Systems Engineering is heading in the right direction.

References

- All pictures were taken at the 2015 Bay Area Maker Faire.

- OCP server specifications and designs

- OCP networking specifications and designs

- Open Rack specifications and designs